The Fundamentals Still Haven't Changed: Typewriters to Tokens

1956: An MIT laboratory develops a new version of the Whirlwind I for the U.S. Navy. It is the first computer with a built-in typewriter keyboard for direct input, the Friden Flexowriter, which starts to make punch card procedures obsolete.

1978: The VT100 is released, the first terminal with a video display to support the ANSI codes used today. There are control codes for the keyboard's lights, reminding me of the RGB lights on my mechanical keyboard that have been a great way to impress women.

1989: The bash terminal shell is released under one of the first open source licenses, combining many of the best features of existing shells that could control the Unix operating system. Two years later, Linus Torvalds ports it to Linux.

2005: Linus Torvalds releases git, an improved system of version control and collaboration for developing a directory of files, though Linus's first commit to its own source code calls it "the information manager from hell."

2011: The idea of a "coding bootcamp" is first seen. The current barrier to entry for code self-education is lower than most people realize. Years later, I attend one of these bootcamps, where I am first taught to use bash and git in macOS's terminal emulator.

2025: Claude Code is released, a fully terminal-based AI coding interface that becomes popular with developers of all experience levels. A year later, I attend a vibe coding hype presentation of Claude targeting a non-technical audience that inevitably ends up emphasizing some familiarity with bash and git.

What even are the fundamentals of software engineering?

A pattern we see in software development is a stark contrast: the way we work changes ever more rapidly and dramatically, yet some aspects never seem to die.

People hardly seem to agree on which aspects are dead, which are about to die, which are worth spending time on, and so on.

It doesn't help that platforms where general tech discourse is held, like LinkedIn and other social media, reward hot takes and bold predictions more than patient, measured discussion.

When this is the case, it's hard to find agreement on what fundamental skills even are.

To JavaScript, or not to JavaScript

Ten years ago, it was very valuable just to be very strong at JavaScript. However, even then, calling knowledge of JavaScript a truly fundamental skill was arguably too superficial.

Now, even the idea that knowing JavaScript is important for writing JavaScript is being challenged.

To say that operating the 1978 VT100 terminal specifically is a fundamental skill today would be obviously ridiculous.

What are we doing today, though? Many of us still decide to emulate the same experience and even use the latest AI in similarly ANSI-compatible terminal software.

Apparently, something stuck there, and there is something to this beyond just receiving software hand-me-downs.

The Practice of Coding: A 7-Foot Toddler

Part of what I believe is difficult about these discussions is the fact that computer science and coding are very young practices in terms of human history.

While we advance technology at never-before-seen rates, we barely have time to process what we've really been doing.

It seems like just as we start to really define development, something comes along that shakes up the details of that narrative. We're left picking up the pieces to see which are still useful to us, while some want to throw all those pieces straight into the trash, as if out of a personal vendetta.

An Understood Domain of Code: Music

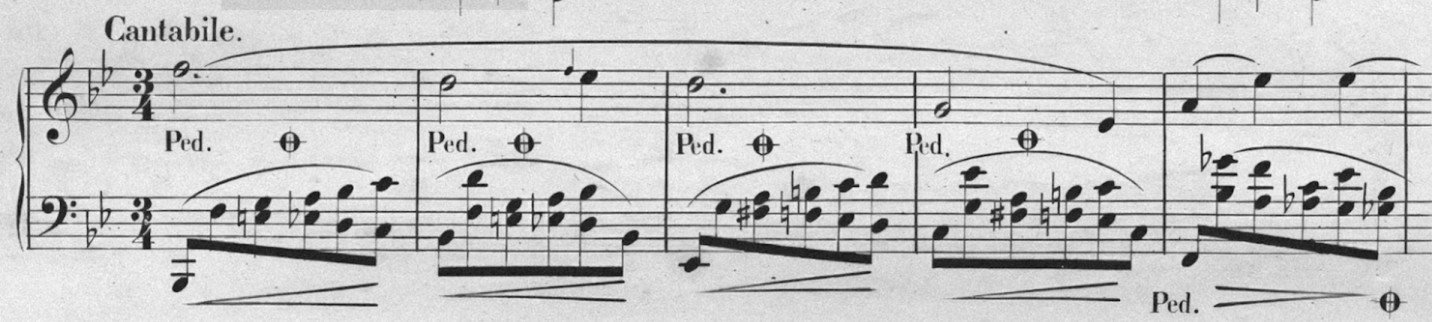

Something many academic musicians are well aware of is the fact that our primary means of communicating about music in great detail (sheet music, music theory concepts, acoustic science, etc.) is a form of musical code.

One could argue that sheet music is one of the oldest and most sophisticated forms of written code, with early forms going back to around 2000 B.C.

An Efficient Code

Sheet music is extremely efficient at representing a large amount of music.

One page potentially equates to several minutes' worth of music, providing a large variety of instructions for the performer: pitch, duration, key, tempo, volume, harmonic context, rhythmic meter, technique, emotion, free-form comments, and more.

All of these can be compressed into a relatively short line that's still readable enough for a skilled reader to digest at sight. Is any of this sounding familiar?

Known Musical Fundamentals

People have been practicing and teaching music for millennia. Thanks to this, a lot is known about the mental and physical development of musical skills. Exercises and whole systems of thought have been passed down broadly for generations.

That being said, on some level, musical traditions still haven't escaped the bulldozer of tech.

A guitarist can use an electronic tuner instead of their ear. However, most musicians still would agree that this in no way makes hearing when something is out-of-tune obsolete, even for guitarists.

An electronic musician can fully automate rhythm. However, again, most musicians agree that this does not make understanding rhythm obsolete. Many electronic musicians eventually come to value having physical electronic instruments like beat pads so they can capture rhythm and emphasis from their head naturally, using old school tools: their minds and their hands.

The Nature of Fundamentals, in a Sense

The two examples I provided hit two major fundamentals for a musician: a sense of pitch and a sense of rhythm.

Fundamental skills are often related to some underlying human sense that is applicable regardless of how advanced the technology used on top of it is.

Engineering Senses

It's difficult to truly capture the internal senses we're using as software engineers. Many who can feel them have been trying to express them in a variety of ways despite the difficulty, including myself.

I'm going to attempt here to describe some of the "senses" that engineers use that are still fundamental today. I won't pretend that it's an exhaustive list.

Eye-Rolling Acronyms

DRY. SOLID. YAGNI. Some of these acronyms for engineering practices and principles are practiced dogmatically or dismissed entirely. Dismissal is becoming more prevalent these days.

I think many of these principles are still relevant and beneficial, even if one is heavily relying on AI generation, and they stem from our fundamental engineering senses.

A Sense of Organization

Even if you aren't experienced as a coder, you have some sense of when you are poorly organized, physically or digitally. We learn to organize a room earlier in life on some level, and we also tend to learn lessons with digital organization when we lose or confuse important files for school or work.

Many of the classical engineering principles could be said to be practices of organization.

DRY (Don't Repeat Yourself) and the S in SOLID (Single Responsibility) are a couple of these, and they're closely related.

DRY doesn't mean, and has never meant, "Any repetition is automatically bad," and Single Responsibility doesn't mean "Nothing can ever do more than one thing."

The real spirit of DRY is "Code becomes hard to keep track of when too many parts do the same thing," and an aspect of Single Responsibility is "When something has too many different jobs, it's hard to organize it properly."

Say you have three copies of an essay for school in different folders on your computer. You edit one on your USB drive, unplug it, and forget you edited it there. Then you edit the copy in your Documents folder, save it, and turn in a different copy from your Downloads folder that appeared when it was time to upload. You've already suffered from a lack of DRY.
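
To put the same idea in code, here is a minimal sketch. Everything in it is hypothetical and invented for illustration: the shop, the function names, and the 10% member discount. The point is only that the discount rule lives in one place, so changing it can't leave a stale copy behind the way the essay did.

```python
# Hypothetical example: the names and the 10% rule are made up for illustration.

MEMBER_DISCOUNT_PERCENT = 10  # the one place the rule is written down


def apply_member_discount(price_cents: int) -> int:
    """Return the price in cents after the member discount."""
    return price_cents * (100 - MEMBER_DISCOUNT_PERCENT) // 100


def cart_total(prices_cents: list[int]) -> int:
    """Total for a cart, reusing the single discount rule."""
    return sum(apply_member_discount(price) for price in prices_cents)


def receipt_line(name: str, price_cents: int) -> str:
    """One receipt line, reusing the same rule instead of re-deriving it."""
    return f"{name}: {apply_member_discount(price_cents) / 100:.2f}"
```

If `cart_total` and `receipt_line` each carried their own copy of the arithmetic, they would be the Documents and Downloads copies of the essay: fine until one of them gets edited.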

Organization and AI

When I engage with AI, I keep these principles in mind. I don't let it repeat code too much, because there's a good chance it will later miss the fact that the repetition exists. That chance grows as the code grows. This coincidentally is the same thing that happens to people who are working with code. I tend to refactor repeated code at the same point I would if I were writing without any AI.

YAGNI (You Aren't Gonna Need It) is a principle that counterbalances over-engineering by discouraging unnecessary features and designs more complex than is practical. When one can't define a clear scope or purpose for AI's work, it can generate a lot of unnecessary code. That code will then get in its own way later, the same way it does for people. Precise intent comes from engineering experience that can recognize when a feature is overkill.

An AI tool only works with so much context, just like us, and it never has a perfect memory or history of every engineering decision that you or it made. In my experience, no matter how smart an AI tool gets, it benefits from the same things humans benefit from when it comes to code organization. I have not yet seen the need for that organization to be driven by my own senses go away, and I'm skeptical that this will fundamentally change.

A Sense of Clarity in Naming

Naming has long been said to be one of the most difficult problems in coding.

This is because a good name succinctly describes what something is, but it strips away a massive amount of detail, only allowing a relatively short sequence of text characters.

If it's too vague, every time you see it, there are too many different things it could be. If it's more specific than what it really is, then it subtly hides aspects of itself. If it's inaccurate, it's inherently confusing.

You've probably experienced issues with naming in other areas of life. I once lived in a city where the electric company was called CPS, and its workers show up on your doorstep with hats and name tags bearing those letters. It's not the best practice to use a name that is very easily confused with something else of the same name without anything extra to qualify it. (Ever deal with the word "client" on its own in a coding context?)
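
As a small sketch of the "client" problem, assume a hypothetical invoicing script where "client" could mean either a paying customer or a connection to a billing service. The class and function names below are invented for illustration; the only claim is that qualified names remove the guesswork for whoever, or whatever, reads the code next.

```python
from dataclasses import dataclass


@dataclass
class CustomerAccount:
    """A paying customer: one thing "client" often means."""
    display_name: str


@dataclass
class BillingApiConnection:
    """A connection to a billing service: another thing "client" often means."""
    base_url: str


def describe_invoice_target(
    customer: CustomerAccount, billing_api: BillingApiConnection
) -> str:
    # With two parameters both named some form of "client", this line
    # would be much harder to read at a glance.
    return f"Invoice {customer.display_name} via {billing_api.base_url}"
```

Compare that signature with a version that takes `client` and `client2`: the code would run the same, but every reader would have to open both definitions to find out which is which.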

Naming and AI

This matters whether an AI or a human is reading code. It applies when prompting an AI as well: it would confuse a model the same way it would confuse a person if you said "I want you to work on my project called My Cool API" when the project is actually a desktop GUI.

We are not at a point where 100% of code can be trusted blindly to AI, and I'm not going to try to predict a day when that becomes true. I also find it hard to believe that day is coming as soon as some people say and in the form they describe, and there's always the chance we never really get there, just as ANSI terminals still haven't died after around 50 years. Regardless, right now and for probably longer than the biggest hype-drivers say, we're not there.

I had to code and debug bun-workspaces's fancy terminal output when running scripts in parallel mostly myself, because AI is not well-trained on making fancy terminal interfaces, and I found that any model I used was pretty terrible at debugging it, leaving most of the real work to me.

This is an extreme example, but I often find that there are important features to write where I at least start them myself or work in hybrid with AI. That means that the names I decide really matter, because they signal intent to the AI like prompts themselves, again in the same way good names help humans understand and build on top of existing code, and the human element is far from irrelevant.

AI also draws on a mix of the conventions it's been trained on. Unless I guide it with naming conventions of my own, it will mix them. That makes the code difficult for me to debug and makes it difficult for the AI to settle on its own patterns going forward. Naming consistency means it has less friction in deciding on names and in understanding existing names from a pattern, which are, again, the same benefits people get.

A Sense of Fluency

Coding, music, writing: All of these are essentially language skills.

After all, when talking about code in the general sense of a system of symbolic representation, you could say that language is a code rather than that code is a language.

There have been studies like this one that have shown that language skills predict coding skills better than math skills.

If you've studied another language at all, you know you can feel a visceral difference between reading your main language and one you aren't as fluent in.

Fluency and AI

At the moment, we're seeing that experienced engineers are often the ones benefiting the most from new AI development workflows.

If this is true, then the argument that fluency in code is no longer needed is backwards. The implication is that having what is sometimes being posited as "traditional" coding fluency is still a valuable thing to learn.

Before AI, I had already been doing code reviews and managing several growing projects at a time. Fluency was imperative because no one has time to constantly study, from the ground up, code that they or others have already written.

Now, I still need this fluency, maybe more than ever. Sometimes I focus it on tests. If AI creates a helper module for me, I want to be able to skim it and understand how it's working. If that module is too complex to fully process in my head, then that's where having tests, code composed mostly of obvious "when this happens, then this should happen" statements in straightforward syntax, becomes even more valuable. This then gives me a place in the code that is easier to read quickly, with confidence that my project is still in good shape.
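
Here is a rough sketch of what I mean, assuming a hypothetical `slugify` helper of the kind an AI might generate for me. The helper and the test names are invented for illustration. The regex inside the helper takes a moment to parse, but the tests read as plain "when this, then that" statements that I can skim.

```python
import re


def slugify(title: str) -> str:
    """Hypothetical helper: turn a title into a URL-friendly slug."""
    lowered = title.strip().lower()
    # Collapse every run of characters that aren't a-z or 0-9 into one hyphen,
    # then trim hyphens left over at either end.
    return re.sub(r"[^a-z0-9]+", "-", lowered).strip("-")


def test_when_title_has_spaces_then_they_become_hyphens():
    assert slugify("Typewriters to Tokens") == "typewriters-to-tokens"


def test_when_title_has_punctuation_then_it_is_dropped():
    assert slugify("Hello, World!") == "hello-world"


def test_when_title_is_blank_then_slug_is_empty():
    assert slugify("   ") == ""
```

The test functions follow the naming pattern a runner like pytest discovers, but they are ordinary functions and can be called directly as well.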

Conclusion

I'm in no way trying to gatekeep people from coding with AI. I'm not saying you need to stop everything you're doing just to work on these fundamentals before you "earn" it. But I am saying that I strongly do not believe we're at a point where you can ignore them entirely, and because of the nature of fundamentals themselves, I find it hard to believe that they will actually ever truly go away without just taking some new form.

Fundamentally, git isn't complex because of the complexity of code but because of the complexity of versioning and collaborating on text-based projects. Text doesn't die because it's an extremely efficient, established means of communication that works well for both machines and people. That is part of why modern engineers are still using software that looks and acts something like a virtualized 1978 computer console: text input and text output have been and still remain king, whether it's terminal stdio, AI prompting, or sending stupid messages to your loved ones.